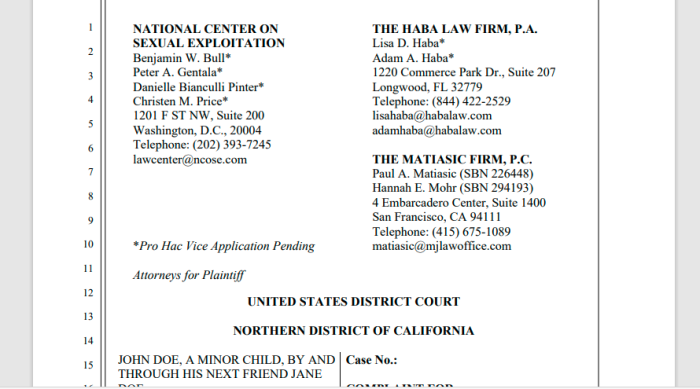

Twitter is being sued in U.S. District Court in the Northern District of California. It’s alleged that Twitter refused to take down pornographic material even after becoming aware that it involved minors who had been exploited. The site endsexualexploitation.org posted a copy of the complaint. The names were redacted in the papers to protect the identities of the family.

Just a reminder: at this point, these are just accusations against Twitter.

1. Epoch Times Interviews Plaintiff’s Lawyer

Lisa Haba, lawyer for the victim, gave an interview to Jan Jekielek of Epoch Times a few days ago. It is well worth a watch. They bring up several interesting topics, including the use of Section 230 as a legal defense.

2. Quotes From The Lawsuit Against Twitter

This is a civil action for damages under the federal Trafficking Victims’ Protection Reauthorization Act (“TVPRA”), 18 U.S.C. §§ 1591 and 1595, Failure to Report Child Sexual Abuse Material, 18 U.S.C. § 2258A, Receipt and Distribution of Child Pornography, 18 U.S.C. §§ 2252A, and related state law claims arising from Defendant’s conduct when it knowingly hosted sexual exploitation material, including child sex abuse material (referred to in some instances as child pornography), and allowed human trafficking and the dissemination of child sexual abuse material to continue on its platform, therefore profiting from the harmful and exploitive material and the traffic it draws.

1. Sex trafficking is a form of slavery that illegally exists in this world—both throughout the United States and globally—and traffickers have been able to operate under cover of the law through online platforms. Likewise, those platforms have profited from the posting and dissemination of trafficking and the exploitative images and videos associated with it.

2. The dissemination of child sexual abuse material (CSAM) has become a global scourge since the explosion of the internet, which allows those that seek to trade in this material to equally operate under cover of the law through online platforms.

3. This lawsuit seeks to shine a light on how Twitter has enabled and profited from CSAM on its platform, choosing profits over people, money over the safety of children, and wealth at the expense of human freedom and human dignity.

4. With over 330 million users, Twitter is one of the largest social media companies in the world. It is also one of the most prolific distributors of material depicting the sexual abuse and exploitation of children.

28. Twitter explains how it makes money from advertising services as follows:

We generate most of our advertising revenue by selling our Promoted Products. Currently, our Promoted Products consist of the following:

• Promoted Tweets. Promoted Tweets, which are labeled as “promoted,” appear within a timeline, search results or profile pages just like an ordinary Tweet regardless of device, whether it be desktop or mobile. Using our proprietary algorithms and understanding of the interests of each account, we can deliver Promoted Tweets that are intended to be relevant to a particular account. We enable our advertisers to target an audience based on an individual account’s interest graph. Our Promoted Tweets are pay-for-performance or pay-for-impression delivered advertising that are priced through an auction. Our Promoted Tweets include objective-based features that allow advertisers to pay only for the types of engagement selected by the advertisers, such as Tweet engagements (e.g., Retweets, replies and likes), website clicks, mobile application installs or engagements, obtaining new followers, or video views.

65. In 2017, when John Doe was 13-14 years old, he engaged in a dialog with someone he thought was an individual person on the communications application Snapchat. That person or persons represented to John Doe that they were a 16-year-old female and he believed that person went to his school.

66. After conversing, the person or persons (“Traffickers”) interacting with John Doe exchanged nude photos on Snapchat.

67. After he did so the correspondence changed to blackmail. Now the Traffickers wanted more sexually graphic pictures and videos of John Doe, and recruited, enticed, threatened and solicited John Doe by telling him that if he did not provide this material, then the nude pictures of himself that he had already sent would be sent to his parents, coach, pastor, and others in his community.

68. Initially John Doe complied with the Traffickers’ demands. He was told to provide videos of himself performing sexual acts. He was also told to include another person in the videos, to which he complied.

69. Because John Doe was (and still is) a minor and the pictures and videos he was threatened and coerced to produce included graphic sexual depictions of himself, including depictions of him engaging in sexual acts with another minor, the pictures and videos constitute CSAM under the law.

70. The Traffickers also attempted to meet with him in person. Fortunately, an in person meeting never took place.

85. John Doe submitted a picture of his drivers’ license to Twitter proving that he is a minor. He emailed back the same day saying:

91. On January 28, 2020, Twitter sent John Doe an email that read as follows:

Hello,

Thanks for reaching out. We’ve reviewed the content, and didn’t find a violation of our policies, so no action will be taken at this time.

If you believe there’s a potential copyright infringement, please start a new report.

If the content is hosted on a third-party website, you’ll need to contact that website’s support team to report it.

Your safety is the most important thing, and if you believe you are in danger, we encourage you to contact your local authorities. Taking screenshots of the Tweets is often a good idea, and we have more information available for law enforcement about our policies.

Thanks,

Twitter

In short, the victim met someone online who was pretending to be someone else, and who got him to send nude photos under false pretenses. The teen, who is still a minor today, was then blackmailed into sending more.

Some of this was posted on Twitter. Despite verifying the age and identity of the victim, Twitter refused to remove the content, saying that it found no violations of its terms of service. It was only after Homeland Security stepped in that Twitter finally complied.

Interestingly, almost half of the complaint against Twitter consists of copies of its own rules, policies, and terms of service. Twitter has rules on the books to prevent exactly this type of thing, but (allegedly) refused to act when it was brought to their attention.

The comment about “potential copyright infringement” comes across as a slap in the face. That was clearly never the concern of the child.

Twitter has not filed a response, so we’ll have to see what happens next.

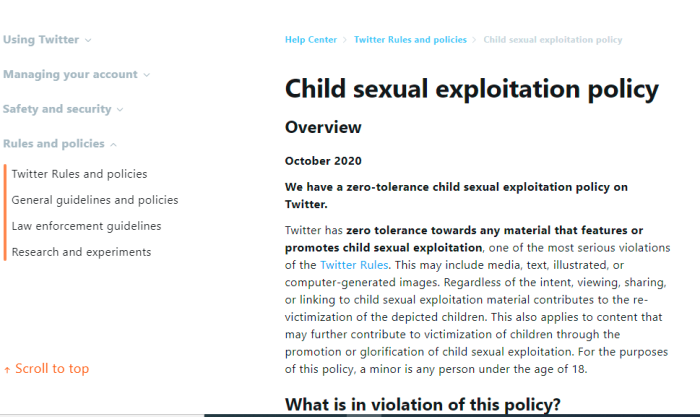

3. Current Twitter Policy On Exploiting Minors

Child sexual exploitation policy

Overview

October 2020

We have a zero-tolerance child sexual exploitation policy on Twitter.

Twitter has zero tolerance towards any material that features or promotes child sexual exploitation, one of the most serious violations of the Twitter Rules. This may include media, text, illustrated, or computer-generated images. Regardless of the intent, viewing, sharing, or linking to child sexual exploitation material contributes to the re-victimization of the depicted children. This also applies to content that may further contribute to victimization of children through the promotion or glorification of child sexual exploitation. For the purposes of this policy, a minor is any person under the age of 18.

What is in violation of this policy?

Any content that depicts or promotes child sexual exploitation including, but not limited to:

-visual depictions of a child engaging in sexually explicit or sexually suggestive acts;

-illustrated, computer-generated or other forms of realistic depictions of a human child in a sexually explicit context, or engaging in sexually explicit acts;

-sexualized commentaries about or directed at a known or unknown minor; and

-links to third-party sites that host child sexual exploitation material.

The following behaviors are also not permitted:

-sharing fantasies about or promoting engagement in child sexual exploitation;

-expressing a desire to obtain materials that feature child sexual exploitation;

-recruiting, advertising or expressing an interest in a commercial sex act involving a child, or in harboring and/or transporting a child for sexual purposes;

-sending sexually explicit media to a child;

-engaging or trying to engage a child in a sexually explicit conversation;

-trying to obtain sexually explicit media from a child or trying to engage a child in sexual activity through blackmail or other incentives;

-identifying alleged victims of childhood sexual exploitation by name or image; and

-promoting or normalizing sexual attraction to minors as a form of identity or sexual orientation.

At least on paper, Twitter has very strong policies against the sort of behaviour that is outlined in the California lawsuit. It’s baffling why Twitter wouldn’t immediately remove the content. This isn’t the hill to die on for any company.

Twitter can, and does, suspend accounts for insulting pedophiles and making comments about death or castration. Yet, this incident wasn’t against their terms of service.

4. Title 47, CH 5, SUBCHAPTER II Part I § 230

(c) Protection for “Good Samaritan” blocking and screening of offensive material

(1) Treatment of publisher or speaker

No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.

(2) Civil liability

No provider or user of an interactive computer service shall be held liable on account of—

(A) any action voluntarily taken in good faith to restrict access to or availability of material that the provider or user considers to be obscene, lewd, lascivious, filthy, excessively violent, harassing, or otherwise objectionable, whether or not such material is constitutionally protected; or

(B) any action taken to enable or make available to information content providers or others the technical means to restrict access to material described in paragraph (1).

(d) Obligations of interactive computer service

A provider of interactive computer service shall, at the time of entering an agreement with a customer for the provision of interactive computer service and in a manner deemed appropriate by the provider, notify such customer that parental control protections (such as computer hardware, software, or filtering services) are commercially available that may assist the customer in limiting access to material that is harmful to minors. Such notice shall identify, or provide the customer with access to information identifying, current providers of such protections.

The commonly referenced “Section 230” is part of the 1996 Communications Decency Act. This gave platforms, both existing ones and those that came later, significant legal protections. They were considered platforms, not publishers.

The distinction between platforms and publishers seems small, but is significant. Platforms are eligible for certain benefits and tax breaks, but cannot (except in limited circumstances) be held liable. Publishers, however, can be much more discriminatory about what they allow to be shown.

The wording is such that it does give wiggle room for publishers to apply their own take on what material is considered offensive.

It has been suggested that Twitter could rely on its Section 230 protections, but that would not shield it from penalties for criminal actions. The allegations made in this lawsuit are not just civil, but criminal in nature.

While Twitter may not be liable for everything that goes on, this particular incident was brought to their attention. They asked for identification and age verification, received it, and then decided there was no violation of their terms of service. So claiming ignorance would be extremely difficult.

5. Loss Of Social Media Anonymity?!

One issue not discussed as much is a potential consequence of legal actions against platforms like Twitter. Will this lead to the loss of anonymous accounts? Might identity verification come as an unintended consequence?

While no decent person wants children (or anyone) to be taken advantage of, there is a certain security in knowing that online and private life can be separated. This is the era of doxing, harassment and stalking, and as such, there are legitimate concerns for many people. This is especially true for those discussing more controversial and politically incorrect topics.

Do we really want things to go the way of Parler, which began demanding government-issued I.D., and then had a “data breach”?

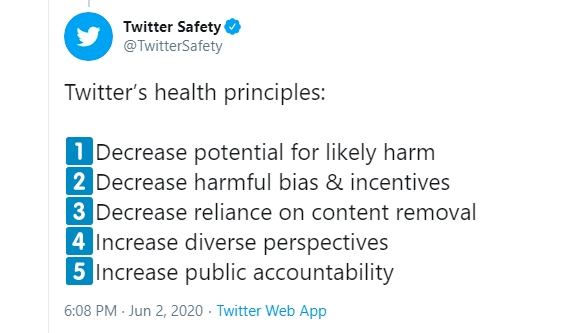

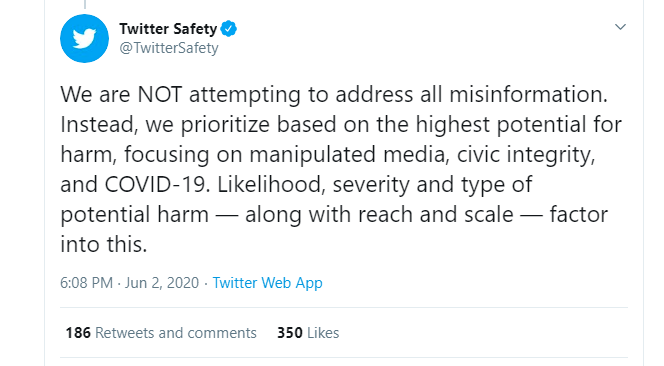

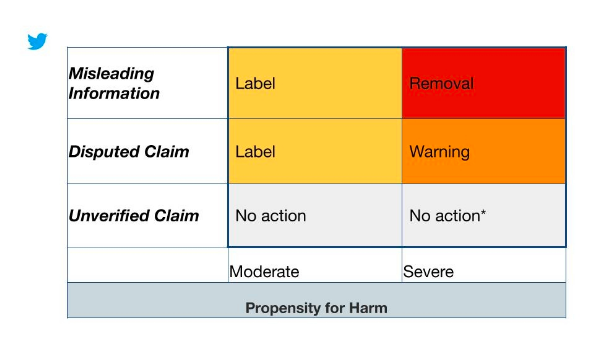

6. Twitter Policies On “Medical Misinformation”

https://twitter.com/TwitterSafety/status/1267986500030955520

https://twitter.com/Policy/status/1278095924330364935

http://archive.is/fHoLx

https://blog.twitter.com/en_us/topics/product/2020/updating-our-approach-to-misleading-information.html

This topic is brought up to show how selective Twitter’s commitment is to free speech, and to dissenting viewpoints. Even a charitable interpretation would be that there is political bias in how the rules and standards are applied.

Strangely, Twitter takes a more thorough approach to monitoring and removing tweets and accounts for promoting “medical misinformation”. Despite there being many valid questions and concerns about this “pandemic”, far more of that is censored. Odd priorities.

Yet child porn and exploiting minors can remain up?

Twitter CP Remained Up Lawsuit Filed Statement Of Claim

Endsexualexploitation.org Website Link

Interview With Epoch Times — American Thought Leaders

Twitter T.O.S.: Child Sexual Exploitation Policies

https://archive.is/PVP1w

Twitter Medical Misinformation Policies

https://archive.is/RLwRi

Twitter Misleading Information Updates

https://archive.is/zoqrD